PRTG Manual: Failover Cluster Configuration

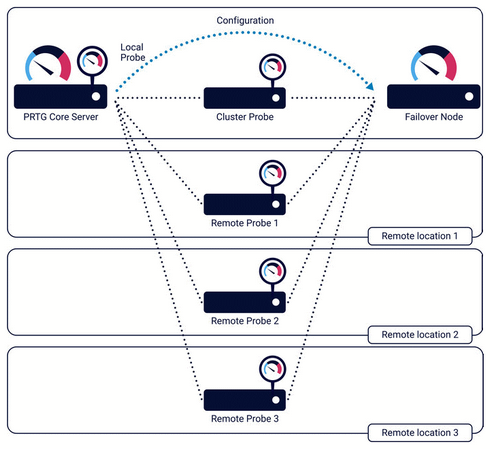

A failover cluster consists of two or more PRTG core servers that work together to form a high availability monitoring system. PRTG offers the single failover cluster (one master node and one failover node) in all licenses, including the freeware edition.

This feature is not available in PRTG Hosted Monitor.

For more information about clusters in general, see section Failover Cluster.

Consider the following notes about clusters:

- You need two target systems that run any supported Windows version. The target systems can be physical machines or virtual machines (VM). For more information, see section System Requirements.

- The machines must be up and running.

- The machines must be similar in system performance and speed (for example, CPU and RAM).

- In a cluster, each of the cluster nodes individually monitors the devices that you add to the cluster probe. This means that the monitoring load increases with every cluster node. Make sure that your devices and your network can handle these additional requests. Often, a longer scanning interval for your entire monitoring setup is a good idea. For example, set a scanning interval of five minutes in the root group's settings.

- We recommend that you install PRTG on dedicated, physical machines for best performance.

- Keep in mind that a machine that runs a cluster node might automatically restart without prior notice, for example, because of special software updates.

- Both machines must be visible to each other through the network.

- Communication between the two machines must be possible in both directions. Make sure that no software or hardware firewall blocks communication. All communication between cluster nodes is directed through one specific Transmission Control Protocol (TCP) port. You define the port during the cluster setup. By default, it is TCP port 23570.

- In a cluster, a Domain Name System (DNS) name that you enter under Setup | System Administration | User Interface in the PRTG web interface is only used in links that point to the master node. You cannot enter a DNS name for a failover node. This means that any HTTP or HTTPS links that point to a failover node (for example, in notifications or in maps) always point to the failover node's IP address in your local network and might therefore not be reachable from external networks or from the internet, particularly if you use network address translation (NAT) rules.

- Email notifications for failover: The failover master node sends notifications if the primary master node is not connected to the cluster. To make sure that PRTG can deliver emails in this case, configure the notification delivery settings so that PRTG can use them to deliver emails from your failover node as well. For example, use the option to set up a secondary Simple Mail Transfer Protocol (SMTP) email server. This fallback server must be available for the failover master node so that it can send emails over it independently from the first email server.

- Make your machines secure. Every cluster node has full access to all stored credentials, other configuration data, and the monitoring results of the cluster. Also, PRTG software updates can be deployed from every cluster node. So, take security precautions against attacks such as intrusions and Trojans. Secure every cluster node as carefully as the master node.

- Run all cluster nodes on either 32-bit or 64-bit Windows versions. Avoid using both 32-bit and 64-bit versions in the same cluster. Such a mixed configuration is not supported and might result in an unstable system. Also, ZIP compression for the cluster communication is disabled in a mixed configuration, so you might encounter higher network traffic between your cluster nodes.

- If you run cluster nodes on Windows systems with different time zone settings and you use schedules to pause monitoring of sensors, the schedules apply at the local time of each cluster node. Because of this, the overall status of a particular sensor is shown as Paused every time the schedule matches a cluster node's local system time. Use the same time zone setting on each Windows system with a cluster node to avoid this behavior.

- The password for the PRTG System Administrator user account is not automatically synchronized on cluster nodes. The default credentials (prtgadmin) for the PRTG System Administrator user account do not work on the failover node. For more information, see the Knowledge Base: I cannot log in to my failover node anymore. What can I do?

- Stay below 2,500 sensors per cluster for best performance in a single failover. Clusters with more than 5,000 sensors are not officially supported. For each additional failover node, divide the number of sensors by two.
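
The sensor limits in the last note can be worked out with a few lines of Python. This is only a sketch of the arithmetic, not an official sizing tool. The function name `sensor_limits` is hypothetical, and the code assumes that both the recommended figure (2,500) and the supported figure (5,000) are halved for every failover node beyond the first one.

```python
def sensor_limits(failover_nodes: int) -> tuple[int, int]:
    """Return (recommended, supported) sensor counts for a cluster.

    Assumption: both figures are divided by two for every failover node
    beyond the first one. The manual only says "divide the number of
    sensors by two", so treat the result as a rough guide.
    """
    if failover_nodes < 1:
        raise ValueError("a cluster needs at least one failover node")
    halvings = failover_nodes - 1
    recommended = 2500 // (2 ** halvings)
    supported = 5000 // (2 ** halvings)
    return recommended, supported


if __name__ == "__main__":
    for nodes in (1, 2, 3):
        recommended, supported = sensor_limits(nodes)
        print(f"{nodes} failover node(s): stay below {recommended} sensors, "
              f"more than {supported} is not officially supported")
```

Under this assumption, a cluster with one master node and two failover nodes should stay below 1,250 sensors.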

In cluster mode, you cannot use sensors that wait for data to be received. Because of this, you can use the following sensors only on a local probe or remote probe:

- DHCP

- HTTP IoT Push Data Advanced

- HTTP Push Count

- HTTP Push Data

- HTTP Push Data Advanced

- IPFIX and IPFIX (Custom)

- jFlow v5 and jFlow v5 (Custom)

- NetFlow v5 and NetFlow v5 (Custom)

- NetFlow v9 and NetFlow v9 (Custom)

- Packet Sniffer and Packet Sniffer (Custom)

- sFlow and sFlow (Custom)

- SNMP Trap Receiver

- Syslog Receiver
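
If you plan a migration to a cluster, it can help to check a list of sensor types against the list above before you move devices to the cluster probe. The following sketch does this with a plain set lookup. The names `RECEIVING_SENSORS` and `allowed_on_cluster_probe` are hypothetical, the sensor names are copied from the list above, and the code treats every name that is not on the list as allowed, which is a simplification.

```python
# Sensor types that wait for incoming data (taken from the list above).
RECEIVING_SENSORS = frozenset(
    name.casefold()
    for name in (
        "DHCP",
        "HTTP IoT Push Data Advanced",
        "HTTP Push Count",
        "HTTP Push Data",
        "HTTP Push Data Advanced",
        "IPFIX", "IPFIX (Custom)",
        "jFlow v5", "jFlow v5 (Custom)",
        "NetFlow v5", "NetFlow v5 (Custom)",
        "NetFlow v9", "NetFlow v9 (Custom)",
        "Packet Sniffer", "Packet Sniffer (Custom)",
        "sFlow", "sFlow (Custom)",
        "SNMP Trap Receiver",
        "Syslog Receiver",
    )
)


def allowed_on_cluster_probe(sensor_type: str) -> bool:
    """Return False for sensor types that only work on a local or remote probe."""
    return sensor_type.strip().casefold() not in RECEIVING_SENSORS


if __name__ == "__main__":
    for sensor in ("Ping", "Syslog Receiver", "NetFlow v9 (Custom)"):
        target = "cluster probe" if allowed_on_cluster_probe(sensor) else "local or remote probe only"
        print(f"{sensor}: {target}")
```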

PRTG provides cluster support for classic remote probes. This means that all of your classic remote probes can connect to all of your cluster nodes. Because of this, you can still see the monitoring data of classic remote probes and sensor warnings and errors even when your master node fails.

You cannot add multi-platform probes to a cluster. For more information about the multi-platform probe, see the manual: Multi-Platform Probe for PRTG.

Consider the following notes about clusters with classic remote probes:

- You must allow remote probe connections to your failover nodes. To do so, log in to each system in your cluster and open the PRTG Administration Tool. On the PRTG Core Server tab, accept connections from remote probes on each cluster node.

- If you use remote probes outside your local network: You must use IP addresses or Domain Name System (DNS) names for your cluster nodes that are valid both for the cluster nodes to reach each other and for remote probes to reach all cluster nodes individually. Open the Cluster settings and adjust the entries for cluster nodes accordingly so that these addresses are reachable from the outside. New remote probes try to connect to these addresses but cannot reach cluster nodes that use private addresses.

- If you use network address translation (NAT) with remote probes outside the NAT: You must use IP addresses or DNS names for your cluster nodes that are reachable from the outside. If your cluster nodes are inside the NAT and the cluster configuration only contains internal addresses, your remote probes from outside the NAT are not able to connect. The PRTG core server must be reachable under the same address for both other cluster nodes and remote probes.

- A remote probe only connects to the PRTG core server with the defined IP address when it starts. This PRTG core server must be the primary master node.

- Initially, remote probes are not visible on failover nodes. You need to set their Cluster Connectivity first in the Administrative Probe Settings for them to be visible and to work with all cluster nodes. Select Remote probe sends data to all cluster nodes for each remote probe that you want to connect to all cluster nodes.

- Newly connected remote probes are visible and work with all cluster nodes immediately after you acknowledge the probe connection. The connectivity setting Remote probe sends data to all cluster nodes is default for new remote probes.

- As soon as you activate a remote probe for all cluster nodes, it automatically connects to the correct IP addresses and ports of all cluster nodes.

- Once a remote probe has connection data from the primary master node, it can connect to all other cluster nodes even when the primary master node fails.

- Changes that you make in the connection settings of cluster nodes are automatically sent to the remote probes.

- If a PRTG core server (cluster node) in your cluster is not running, the remote probes deliver monitoring data after the PRTG core server restarts. This happens individually for each PRTG core server in your cluster.

- If you enable cluster connectivity for a remote probe, it does not deliver monitoring data from the past when cluster connectivity was disabled. For sensors that use difference values, the difference between the current value and the last value is shown with the first new measurement (if the respective sensor previously sent values to the PRTG core server).

- Except for this special case, all PRTG core servers show the same values for sensors on devices that you add to the cluster probe.

- The PRTG core server that is responsible for the configuration and management of a remote probe is always the current master node. This means that only the current master node performs all tasks of the PRTG core server. If you use a split cluster with several master nodes, only the master node that appears first in the cluster configuration is responsible.
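
The notes above about remote probes outside your local network or outside a NAT come down to one question: are the cluster node entries reachable from the outside? As a rough first check, the following sketch classifies each entry with Python's standard `ipaddress` module. The function name `classify_node_entry` and the sample entries are hypothetical. The code cannot tell whether a DNS name resolves to a reachable address from the remote probe's network, so it only flags DNS names for a manual check.

```python
import ipaddress


def classify_node_entry(entry: str) -> str:
    """Classify a cluster node entry as 'public', 'internal', or 'dns-name'.

    'internal' covers private, loopback, link-local, and other addresses
    that are not globally routable. Remote probes outside the local
    network or outside the NAT cannot reach such addresses.
    """
    try:
        address = ipaddress.ip_address(entry.strip())
    except ValueError:
        # Not an IP literal: assume a DNS name that must be checked by hand.
        return "dns-name"
    return "public" if address.is_global else "internal"


if __name__ == "__main__":
    # Hypothetical cluster node entries for illustration only.
    for entry in ("192.168.1.10", "8.8.8.8", "prtg-node2.example.com"):
        print(f"{entry}: {classify_node_entry(entry)}")
```

An entry that is classified as internal is fine as long as all remote probes sit in the same network as the cluster nodes.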

You can use remote probes in a cluster as described above, which means that all cluster nodes show the monitoring data of all remote probes. However, you cannot cluster a remote probe itself. To ensure gapless monitoring for a specific remote probe, install a second remote probe on a machine in your network next to the original remote probe. Then create all devices and sensors of the original remote probe on the second remote probe, for example, by cloning the devices from the original remote probe. The second remote probe is then a copy of the first remote probe and you can still monitor the desired devices if the original remote probe fails.

Remote probes that send data to all cluster nodes result in increased bandwidth usage. Select Remote probe sends data only to primary master node in the probe settings for one or more remote probes to lower bandwidth usage if necessary.

Explicitly check on each cluster node if a remote probe is connected. PRTG does not notify you if a remote probe is disconnected from a cluster node. For example, log in to the PRTG web interface on a cluster node and check in the device tree if your remote probes are connected.

KNOWLEDGE BASE

What is the clustering feature in PRTG?

What are the bandwidth requirements for running a cluster?

What is a failover master node and how does it behave?

I need help with my cluster configuration. Where do I find step-by-step instructions?

Cluster: How do I convert a (temporary) failover master node to be the primary master node?

Are there alternatives to the cluster when running a large installation?

I cannot log in to my failover node anymore. What can I do?

OTHER MANUALS

Multi-Platform Probe for PRTG (PDF)

PAESSLER WEBSITE

How to connect PRTG through a firewall in 4 steps

How to set up a failover cluster in PRTG in 6 steps