PRTG Manual: Failover Cluster

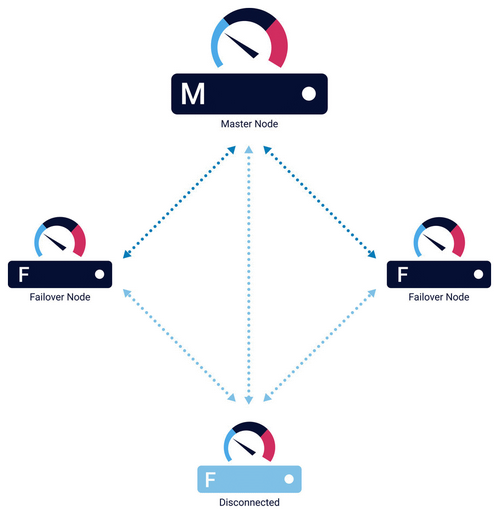

A cluster consists of two or more PRTG core servers that work together to form a high-availability monitoring system.

This feature is not available in PRTG Hosted Monitor.

A cluster consists of at least two cluster nodes: one master node and one to four failover nodes. Each cluster node is a full PRTG core server installation that can perform all of the monitoring and alerting on its own.

See the following table for more information on how a cluster works:

| Feature | Description |
|---|---|
| Connection and communication | Cluster nodes are connected to each other with two TCP/IP connections. They communicate in both directions and a single cluster node only needs to connect to one other cluster node to integrate itself into the cluster. |
| Object configuration | During normal operation, you configure devices, sensors, and all other monitoring objects on the master node. The master node automatically distributes the configuration among all other cluster nodes in real time. |
| Fail-safe monitoring | All devices that you create on the cluster probe are monitored by all cluster nodes, so data from different perspectives is available and monitoring continues even if one of the cluster nodes fails. If the master node fails, one of the failover nodes takes over and controls the cluster until the master node is back. This ensures continuous data collection. |
| Active-active mode | A cluster works in active-active mode. This means that all cluster nodes permanently monitor the network according to the common configuration that they receive from the master node. Each cluster node stores the results in its own database. PRTG also distributes the storage of monitoring results among the cluster. |
| PRTG updates | You only need to install PRTG updates on one cluster node. This cluster node automatically deploys the new version to all other cluster nodes. |
| Notification handling | If one or more cluster nodes discover downtime or threshold breaches, only one installation, either the primary master node or the failover master node, sends out notifications, for example, via email, SMS text message, or push message. Because of this, there is no notification flood from all cluster nodes in case of failures. |
| Data gaps | During the outage of a cluster node, it cannot collect monitoring data. The data of this single cluster node shows gaps. However, monitoring data for the time of the outage is still available on the other cluster nodes. |
| Monitoring results review | Because the monitoring configuration is centrally managed, you can only change it on the primary master node. However, you can review the monitoring results of any of the failover nodes in read-only mode if you log in to the PRTG web interface. |
| Remote probes | If you use classic remote probes in a cluster, each remote probe connects to each cluster node and sends the data to all cluster nodes. You can define the Cluster Connectivity of each remote probe in its settings. |
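The fail-safe monitoring and notification handling rows can be illustrated with a small model. The following Python sketch is not PRTG code and does not use any PRTG API. The `Node` class, the function names, and the node names are hypothetical, and the rule that the first online failover node in list order takes over is an assumption made only for this illustration, because the table does not state how the failover master node is selected.

```python
from dataclasses import dataclass


@dataclass
class Node:
    """Hypothetical model of one cluster node (not a PRTG data structure)."""
    name: str
    is_primary_master: bool
    online: bool = True


def current_master(nodes):
    """Return the node that currently controls the cluster, or None if all are down."""
    online = [n for n in nodes if n.online]
    if not online:
        return None
    for node in online:
        if node.is_primary_master:
            return node
    # Assumption for this sketch: the first online failover node takes over.
    return online[0]


def monitoring_nodes(nodes):
    """Active-active mode: every online node keeps monitoring."""
    return [n.name for n in nodes if n.online]


def notifying_nodes(nodes):
    """Only the current master sends notifications, so there is no flood."""
    master = current_master(nodes)
    return [master.name] if master else []


cluster = [
    Node("node-a", is_primary_master=True),
    Node("node-b", is_primary_master=False),
    Node("node-c", is_primary_master=False),
]

print(monitoring_nodes(cluster), notifying_nodes(cluster))
# ['node-a', 'node-b', 'node-c'] ['node-a']

cluster[0].online = False  # the primary master node fails
print(monitoring_nodes(cluster), notifying_nodes(cluster))
# ['node-b', 'node-c'] ['node-b']

cluster[0].online = True   # the primary master node is back
print(notifying_nodes(cluster))
# ['node-a']
```

In this model, the number of monitoring nodes changes with every outage, but the number of notifying nodes never exceeds one.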

Performance Considerations for Clusters

Monitoring traffic and load on the network are multiplied by the number of cluster nodes in use. Furthermore, the devices on the cluster probe are monitored by all cluster nodes, so the monitoring load increases on these devices as well.
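As a rough illustration of this multiplication, the following Python sketch estimates how many sensor requests reach the monitored devices per scanning interval. The function name and the example figures are hypothetical, and the sketch assumes that every sensor on the cluster probe is queried once per interval by every cluster node.

```python
def requests_per_interval(sensors_on_cluster_probe: int, cluster_nodes: int) -> int:
    """Estimate sensor requests per scanning interval across the whole cluster.

    Assumes each cluster node queries every sensor on the cluster probe once.
    """
    if sensors_on_cluster_probe < 0:
        raise ValueError("sensors_on_cluster_probe must not be negative")
    if cluster_nodes < 1:
        raise ValueError("cluster_nodes must be at least 1")
    return sensors_on_cluster_probe * cluster_nodes


# Hypothetical example: 1,000 sensors on the cluster probe.
for nodes in (1, 2, 3):
    print(nodes, requests_per_interval(1000, nodes))
# 1 1000
# 2 2000
# 3 3000
```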

For most usage scenarios, this does not pose a problem, but always keep in mind the system requirements. As a rule of thumb, each additional cluster node means that you need to divide the number of sensors that you can use by two.
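The rule of thumb above can be written as a short calculation. This Python sketch is one reading of the rule, not an official sizing formula: it assumes that "additional" is counted from a single standalone PRTG core server, and the baseline of 10,000 sensors in the example is a hypothetical figure chosen for illustration.

```python
def usable_sensors(single_server_sensors: int, cluster_nodes: int) -> int:
    """Halve the usable sensor count once for each node beyond the first.

    single_server_sensors: sensors that one standalone server could handle
    (hypothetical baseline that you supply).
    cluster_nodes: total number of cluster nodes, including the master node.
    """
    if single_server_sensors < 0:
        raise ValueError("single_server_sensors must not be negative")
    if cluster_nodes < 1:
        raise ValueError("cluster_nodes must be at least 1")
    additional_nodes = cluster_nodes - 1
    return single_server_sensors // (2 ** additional_nodes)


# Hypothetical baseline of 10,000 sensors on a single server.
for nodes in (1, 2, 3):
    print(nodes, usable_sensors(10_000, nodes))
# 1 10000
# 2 5000
# 3 2500
```

Treat the result as a planning estimate only and check it against the system requirements.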

PRTG does not officially support more than 5,000 sensors per cluster. Contact the Paessler Presales team if you exceed this limit. For possible alternatives to a cluster, see the Knowledge Base: Are there alternatives to the cluster when running a large installation?

For more information, see section Failover Cluster Configuration.

KNOWLEDGE BASE

What is the clustering feature in PRTG?

In which web interface do I log in to if the master node fails?

What are the bandwidth requirements for running a cluster?

Are there alternatives to the cluster when running a large installation?

PAESSLER WEBSITE

How to connect PRTG through a firewall in 4 steps

VIDEO TUTORIAL

How to set up a PRTG cluster